In addition to this blog post, we discussed our ICLR 2020 experience in a Numenta Research Meeting. For those interested, you can watch the video of that discussion here.

On April 26-30, we attended the 2020 edition of ICLR, the International Conference on Learning Representations. ICLR is a relatively young but respected conference in the deep learning community. Several interesting papers in both theory and application were introduced at the conference.

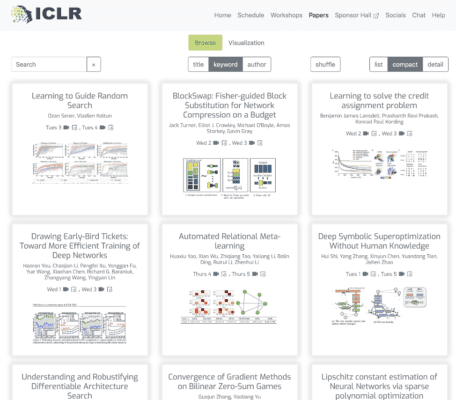

The conference was originally planned for Addis Ababa, the capital of Ethiopia, in a brilliant move by its organizers to address the visa problems of researchers who were denied visas for last year’s conference, and to promote research in African countries. Unfortunately, COVID-19 prevented the conference from happening as planned. Nonetheless, its organizers undertook a herculean effort in a very short time frame and successfully transformed ICLR into an innovative online conference. A highlight was the well-organized paper repository, with each paper linked to a 5-minute video, slides, and reviews. Search and visualization tools made it easy to browse the topics of your choice.

In this blog post, we briefly review a few papers we found particularly interesting. We organized our trip report into three topics matching some of the current research at Numenta: neuroscience, deep learning theory, and pruning and sparsity.

Neuroscience

Because the conference is traditionally aimed at the deep learning community, neuroscience and related sciences don’t usually get a lot of space at ICLR. There were, however, many interesting talks touching on the field, mostly in the workshop Bridging AI and Cognitive Science.

Our favorite was a brilliant talk by MIT roboticist Leslie Pack Kaelbling, who also gave a longer talk at the main conference. Leslie laid out in a very candid fashion how roboticists approach building embodied intelligent systems, what lessons cognitive scientists could learn from this perspective, and what roboticists could in turn learn from research in neuroscience and cognitive science.

Intelligent behavior, according to Leslie, has to be flexible yet robust, purposeful but adaptable, and able to plan over both short- and long-term horizons. Anyone who has ever worked in robotics knows it is a lot harder than it seems at first glance. Learning how the brain does things like encode space, deal with multiple time scales, model other agents’ behaviors, and avoid fruitlessly repeating the same actions would help to improve our current AI systems.

Another interesting presentation was Model Zoology and Neural Taxonomy for better characterizing mouse visual cortex, which further explores the relationship between neural activity and the activations of deep neural networks. Conwell et al. analyze a two-photon calcium imaging dataset from more than 30,000 neurons in the mouse visual cortex, and compare it to over 50 different neural network architectures across 21 vision tasks. The best scores were achieved on 2D segmentation, but the low r-squared values across all tasks indicate there is still a lot of variance in neural activity that current neural network models can’t predict.

Along the same lines, a paper in the main conference titled Emergence of functional and structural properties of the head direction system by optimization of recurrent neural networks, by Cueva et al., shows that not only neural activity but also anatomical properties of neural circuits can emerge in trained neural networks. They train an RNN to estimate head direction by integrating angular velocity, mimicking the head direction circuit of a fruit fly, and show that compass neurons and shifter neurons emerge naturally as a result of training. Although arguments can be made about the correct way to interpret the results of these experiments, and about whether it is valid to hypothesize a correspondence between artificial neural networks and our neural pathways, the work is certainly an interesting addition to this literature. It lays the foundation for future research on more complex neural circuits.

Deep Learning Theory

Deep learning theory is where ICLR shines. We saw some relevant work on tooling and highlight two libraries here. BackPACK: Packing more into Backprop, by Dangel et al., introduces a library built on top of PyTorch that efficiently computes additional quantities that are byproducts of the backward pass. These include, for example, the individual gradients of each sample in the minibatch, estimates of the gradient variance or second moment, and approximations of second-order information. Many interesting models rely on these extra quantities for more complex tasks such as compression or continual learning, and a standard package that makes them easy to access is a great time saver when implementing those models. We hope to see this library adopted by the PyTorch community and continuously grow to include new and relevant metrics.
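
To make those quantities concrete, here is a minimal pure-Python sketch of what "individual gradients" and their statistics mean for a toy linear model with squared loss. It does not use BackPACK or PyTorch, and the function names `per_sample_gradients` and `gradient_statistics` are our own illustrative choices, not part of any library.

```python
from statistics import fmean


def per_sample_gradients(w, xs, ys):
    """Gradient of 0.5 * (w.x - y)^2 with respect to w, one row per sample."""
    grads = []
    for x, y in zip(xs, ys):
        residual = sum(wi * xi for wi, xi in zip(w, x)) - y
        grads.append([residual * xi for xi in x])
    return grads


def gradient_statistics(grads):
    """Per-coordinate mean, second moment, and variance across the minibatch."""
    columns = list(zip(*grads))
    mean = [fmean(c) for c in columns]
    second_moment = [fmean(g * g for g in c) for c in columns]
    variance = [m2 - m * m for m2, m in zip(second_moment, mean)]
    return mean, second_moment, variance


# Toy minibatch of four samples with two features each (made-up numbers).
w = [1.0, -1.0]
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]]
ys = [0.0, 0.0, 1.0, 0.0]

grads = per_sample_gradients(w, xs, ys)
mean, second_moment, variance = gradient_statistics(grads)
```

A standard backward pass only returns the averaged gradient (`mean` here); the per-sample rows and the statistics derived from them are the kind of extra information the paragraph above refers to.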

The other interesting new library is Neural Tangents, which “allows researchers to define, train, and evaluate infinite networks as easily as finite ones”. Infinite-width neural networks are an important theoretical concept for neural network analysis, but have also recently been used successfully in practical applications. The stellar group of researchers behind this tool (which already had 929 stars on GitHub at the time of this post) is certainly predictive of its success.

Also on deep learning theory, the paper Fantastic Generalization Measures and Where to Find Them makes a formidable effort to evaluate 40 generalization measures over more than 2,000 models on two datasets, with the goal of uncovering potential causal relationships between the measures and generalization. This work presents a lot of surprising findings, including, for example, that norm-based measures (such as the L2 norm, widely used for regularization) have little or even negative correlation with generalization. The most promising measures identified are sharpness-based, which measure the sensitivity of the loss over the entire training set to perturbations in the model’s parameters, and optimization-based, such as gradient noise or speed of optimization. This undertaking lays the groundwork for subsequent research on how we can leverage these measures to further improve generalization, and it will likely influence research for years to come.
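
As a rough illustration of what a sharpness-style measure captures, the sketch below estimates the largest increase in training loss under random parameter perturbations of a fixed radius. This is a simplified stand-in of our own, not one of the specific measures defined in the paper; `mean_loss`, `sharpness`, and the `radius`, `trials`, and `seed` parameters are all assumptions made for the example.

```python
import math
import random


def mean_loss(w, data):
    """Mean squared error of a linear model over the whole training set."""
    return sum(
        (sum(wi * xi for wi, xi in zip(w, x)) - y) ** 2 for x, y in data
    ) / len(data)


def sharpness(w, data, radius=0.1, trials=200, seed=0):
    """Largest loss increase found among random perturbations of norm `radius`."""
    rng = random.Random(seed)
    base = mean_loss(w, data)
    worst = 0.0
    for _ in range(trials):
        direction = [rng.gauss(0.0, 1.0) for _ in w]
        norm = math.sqrt(sum(d * d for d in direction)) or 1.0
        perturbed = [wi + radius * d / norm for wi, d in zip(w, direction)]
        worst = max(worst, mean_loss(perturbed, data) - base)
    return worst


# Both datasets are fit exactly by w = [1.0], but the second has larger inputs,
# so its loss surface is much steeper around the solution.
flat_data = [([1.0], 1.0), ([2.0], 2.0)]
sharp_data = [([10.0], 10.0), ([20.0], 20.0)]

flat_value = sharpness([1.0], flat_data)
sharp_value = sharpness([1.0], sharp_data)
```

Both solutions have zero training loss, yet the second sits in a much sharper minimum, which is the kind of difference these measures are meant to expose.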

Pruning and Sparsity

On pruning and sparsity, there were several mentions of the Lottery Ticket Hypothesis, one of the recipients of ICLR’s 2019 best paper award. Comparing Rewinding and Fine-tuning in Neural Network Pruning, by Renda, Frankle and Carbin, further explores the notion of rewinding weights to earlier values after pruning. They show that rewinding the weights to their values at an intermediate point between the first and last epochs outperforms fine-tuning, the classical strategy in iterative pruning. Furthermore, rewinding only the learning rate is already enough to outperform fine-tuning, and remains competitive with weight rewinding while being less memory intensive. Based on these findings, the authors propose a very simple iterative algorithm that only involves training, pruning, and retraining with learning rate rewinding. It is an empirical paper with lots of well-reported experiments, and it shows state-of-the-art results compared to other iterative pruning approaches.
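
The difference between fine-tuning and learning rate rewinding comes down to which learning rates are used while retraining the pruned network. The sketch below contrasts the two under an assumed step schedule; the schedule, its milestones, and the function names are hypothetical choices for illustration, not values taken from the paper.

```python
def step_schedule(epoch, base_lr=0.1, milestones=(30, 60), gamma=0.1):
    """Hypothetical step schedule: multiply the rate by `gamma` at each milestone."""
    drops = sum(epoch >= m for m in milestones)
    return base_lr * gamma ** drops


def fine_tuning_lrs(total_epochs, retrain_epochs):
    """Fine-tuning: retrain at the final (smallest) learning rate throughout."""
    final_lr = step_schedule(total_epochs - 1)
    return [final_lr] * retrain_epochs


def lr_rewinding_lrs(total_epochs, retrain_epochs):
    """Learning rate rewinding: keep the trained weights, but replay the last
    `retrain_epochs` epochs of the original schedule."""
    start = total_epochs - retrain_epochs
    return [step_schedule(epoch) for epoch in range(start, total_epochs)]


fine_tune = fine_tuning_lrs(total_epochs=90, retrain_epochs=40)
rewound = lr_rewinding_lrs(total_epochs=90, retrain_epochs=40)
```

With these assumed settings, fine-tuning spends all 40 retraining epochs at the lowest rate, while rewinding spends the first 10 at the higher intermediate rate before dropping again.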

Another very interesting article is Drawing Early-Bird Tickets: Towards More Efficient Training of Deep Networks. You et al. show there is no need to train a network until convergence to identify the lottery ticket (the pruning mask). In the proposed algorithm, at each epoch they calculate the mask using a pruning method, such as magnitude-based pruning at a target ratio, and compare the masks from successive epochs using the Hamming distance. If the distance between the masks stays below a threshold for several consecutive epochs, the early-bird ticket is identified and training can be stopped (meaning it is not fruitful to continue training, since the masks are not likely to change much after that point). This works even with low-precision training, making it even cheaper to go through the iterative pruning process. Note that this can be easily combined with the learning rate rewinding algorithm proposed in the previous paper, making that more efficient as well.
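
The stopping rule described above can be sketched in a few lines. This is a simplified version under our own assumptions: it uses plain weight-magnitude masks on a flat list of weights, and the `prune_ratio`, `threshold`, and `window` parameters are illustrative names and values rather than the settings used by You et al.

```python
from collections import deque


def magnitude_mask(weights, prune_ratio):
    """Mask that prunes the smallest-magnitude weights (1 = kept, 0 = pruned)."""
    n_prune = int(len(weights) * prune_ratio)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(weights))]


def hamming_distance(mask_a, mask_b):
    """Fraction of positions where the two masks disagree."""
    return sum(a != b for a, b in zip(mask_a, mask_b)) / len(mask_a)


def find_early_bird(weight_history, prune_ratio=0.5, threshold=0.1, window=3):
    """Return (epoch, mask) once the current mask is within `threshold` of each
    of the previous `window` masks, or (None, None) if that never happens."""
    recent = deque(maxlen=window)
    for epoch, weights in enumerate(weight_history):
        mask = magnitude_mask(weights, prune_ratio)
        if len(recent) == window and all(
            hamming_distance(mask, old) < threshold for old in recent
        ):
            return epoch, mask
        recent.append(mask)
    return None, None


# Made-up weights after each of five epochs; the mask settles from epoch 1 on.
history = [
    [0.1, 0.9, 0.5, 0.2],
    [0.8, 0.1, 0.5, 0.2],
    [1.0, 0.05, 0.6, 0.1],
    [1.1, 0.04, 0.7, 0.1],
    [1.2, 0.03, 0.7, 0.1],
]
epoch, ticket = find_early_bird(history, prune_ratio=0.5, threshold=0.1, window=2)
```

In a real training loop the weights would be read from the model at the end of each epoch, and training would stop as soon as a ticket is returned.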

On sparse activations, Enhancing Adversarial Defense by k-Winners-Take-All, by Xiao et al., presents a very interesting analysis of how k-Winners-Take-All can be used to defend against backward pass differentiable approximation attacks. A common defense against adversarial attacks is to obfuscate gradients, but these defenses can be overcome by finding an approximation g(x) ≈ f(x) that is differentiable. However, discontinuities introduced by k-Winners nonlinearities can largely prevent these attacks without hurting the network’s trainability or accuracy. Previous work by Ahmad and Scheinkman shows that k-Winners can make a neural network robust to noise, and this work is an interesting addition showing that the achieved robustness extends to adversarial attacks.
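
For readers unfamiliar with the activation, here is a minimal sketch of k-Winners-Take-All on a plain list of activations: the k largest values pass through unchanged and everything else is set to zero. The function name and tie-breaking rule (earlier index wins) are our own choices, not taken from the paper's code.

```python
def k_winners_take_all(activations, k):
    """Keep the k largest activations and zero out the rest."""
    ranked = sorted(
        range(len(activations)), key=lambda i: activations[i], reverse=True
    )
    winners = set(ranked[:k])
    return [a if i in winners else 0.0 for i, a in enumerate(activations)]


dense = [0.2, 1.5, -0.3, 0.9]
sparse = k_winners_take_all(dense, k=2)

# A tiny change in the input can swap the winner, so the output jumps:
# this is the discontinuity that makes gradient-based attacks harder.
before = k_winners_take_all([1.00, 0.99, 0.10], k=1)
after = k_winners_take_all([0.99, 1.00, 0.10], k=1)
```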

While the lottery ticket papers require fewer and fewer training cycles, we also see improvements in sparse training methods restricted to a single cycle. Dynamic sparse methods may involve either gradually pruning a dense network to a target sparsity, or starting with a sparse network and reallocating connections at fixed intervals throughout training. Both approaches were represented, with thought-provoking results. One of the most notable papers was Rigging the Lottery: Making all Tickets Winners, which was rejected from the main conference due to a lack of theoretical results. The paper details a bag of tricks to improve accuracy, but the main driver of the approach is to prune weights by magnitude and to add back weights according to the gradients of the pruned network. It is empirically rigorous and the best performing overall, and we hope to see it published soon at a major conference.
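
The core prune-and-grow step can be sketched as follows. This is a simplified illustration under our own assumptions: it works on flat lists, only considers connections that were inactive before the drop as growth candidates, and initializes regrown weights to zero. The function name and the `fraction` parameter are hypothetical, and the paper's additional tricks are omitted.

```python
def prune_and_grow(weights, mask, grads, fraction):
    """One connectivity update: drop the smallest-magnitude active weights and
    activate the same number of inactive connections with the largest gradients."""
    active = [i for i, m in enumerate(mask) if m]
    inactive = [i for i, m in enumerate(mask) if not m]
    n_update = min(int(len(active) * fraction), len(inactive))

    dropped = sorted(active, key=lambda i: abs(weights[i]))[:n_update]
    grown = sorted(inactive, key=lambda i: abs(grads[i]), reverse=True)[:n_update]

    new_weights, new_mask = list(weights), list(mask)
    for i in dropped:
        new_weights[i], new_mask[i] = 0.0, 0
    for i in grown:
        new_weights[i], new_mask[i] = 0.0, 1  # regrown connections start at zero
    return new_weights, new_mask


# Made-up layer with three active connections out of six.
weights = [0.5, 0.01, 0.0, 0.0, -0.8, 0.0]
mask = [1, 1, 0, 0, 1, 0]
grads = [0.1, 0.2, 0.05, 0.9, 0.3, -0.4]

new_weights, new_mask = prune_and_grow(weights, mask, grads, fraction=0.34)
```

The number of active connections is unchanged after the update, so the network stays at its target sparsity throughout training.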

In a similar vein, we also saw enhancements to methods that prune before training, also termed “foresight pruning”. The previous standard was set by the Single-Shot Network Pruning (SNIP) algorithm, which prunes an initialized network according to a connection sensitivity metric. This algorithm effectively identifies the weights whose removal would change the loss the least. In contrast, the paper Picking Winning Tickets before Training by Preserving Gradient Flow points out that it’s suboptimal to preserve the loss of an untrained network. Instead, the authors propose Gradient Signal Preservation (GraSP). Essentially, by preserving gradient flow, we can find a pruned network that will receive larger gradient norms during the first step of training. What’s interesting about these foresight pruning approaches is how they incorporate characteristics of the loss function. Many dynamic pruning approaches attempt to preserve certain aspects of the loss, at least indirectly. It would be intriguing to see more sophisticated prune-and-grow methods that better leverage these properties of the loss landscape.
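
As a rough sketch of the connection sensitivity idea, each weight can be scored by the magnitude of its weight-times-gradient product at initialization, normalized over all weights, and only the top-scoring fraction kept. The code below assumes the gradients have already been computed on a minibatch; `snip_mask` and `keep_ratio` are illustrative names, and this is not the authors' implementation. GraSP's criterion needs second-order information and is not shown.

```python
def snip_mask(weights, grads, keep_ratio):
    """Score each connection by |weight * gradient| and keep the top fraction."""
    scores = [abs(w * g) for w, g in zip(weights, grads)]
    total = sum(scores) or 1.0  # avoid dividing by zero if every score is 0
    saliency = [s / total for s in scores]

    n_keep = max(1, int(len(weights) * keep_ratio))
    ranked = sorted(range(len(weights)), key=lambda i: saliency[i], reverse=True)
    kept = set(ranked[:n_keep])
    mask = [1 if i in kept else 0 for i in range(len(weights))]
    return mask, saliency


# Made-up initial weights and minibatch gradients.
weights = [0.5, -2.0, 0.1, 1.0]
grads = [0.2, 0.01, -3.0, 0.0]

mask, saliency = snip_mask(weights, grads, keep_ratio=0.5)
```

Note that the large weight `-2.0` is pruned here because its gradient is tiny, while the small weight `0.1` survives: the score reflects the effect on the loss, not magnitude alone.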

---

If you liked our review, check out our research meeting, where we briefly talk about our experience at the online edition of ICLR 2020 and discuss some of these papers and others. If you spot any mistakes or would like to discuss something in further detail, leave a comment below or drop us an email. We would love to hear from you.

Lucas Souza – lsouza@numenta.com

Michaelangelo Caporale – mcaporale@numenta.com