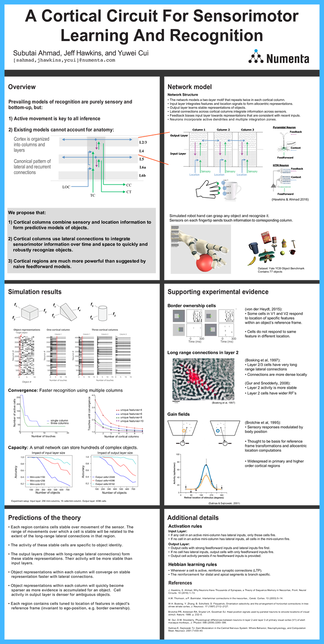

In this poster, we describe a network model of a cortical circuit that learns sensorimotor representations of objects. Prevailing computational models of recognition are purely feed-forward. This passive view is inconsistent with anatomical and physiological experiments, which suggest that active movement is integral to every cortical region, including primary and secondary sensory areas.

This cortical circuit extends previous work by integrating motor representations with feed-forward sensory information to build predictive models of objects.

We propose that:

- Cortical columns combine sensory and location information to form predictive models of objects.

- Cortical columns use lateral connections to integrate sensorimotor information over time and space to quickly and robustly recognize objects.

- Cortical regions are much more powerful than naive feed-forward models suggest.
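As a rough illustration of the first two proposals, the sketch below treats each column as a lookup over learned (location, feature) pairs and uses set intersection as a stand-in for lateral connections. It is a toy under stated assumptions, not the network model described in this poster: the object definitions, the names `OBJECTS`, `column_candidates`, `lateral_vote`, and `recognize`, and the use of plain sets in place of neural representations are all hypothetical.

```python
# Hypothetical toy objects: each maps a location on the object
# to the feature that would be sensed at that location.
OBJECTS = {
    "mug": {"top": "rim", "side": "curved", "back": "handle"},
    "can": {"top": "rim", "side": "curved", "back": "curved"},
    "box": {"top": "flat", "side": "flat", "back": "flat"},
}


def column_candidates(location, feature, objects=OBJECTS):
    """One column: objects whose model predicts `feature` at `location`."""
    return {
        name for name, model in objects.items()
        if model.get(location) == feature
    }


def lateral_vote(candidate_sets):
    """Stand-in for lateral connections: keep objects all columns agree on."""
    return set.intersection(*candidate_sets)


def recognize(steps, objects=OBJECTS):
    """Integrate sensations over space (columns) and time (steps).

    `steps` is a list of time steps; each step is a list of
    (location, feature) pairs, one pair per column.
    """
    candidates = set(objects)
    for step in steps:
        if not step:
            continue  # no column sensed anything at this step
        votes = [column_candidates(loc, feat, objects) for loc, feat in step]
        candidates &= lateral_vote(votes)
        if len(candidates) <= 1:
            break  # unambiguous (or no match); stop moving the sensor
    return candidates


# One column needs several movements to disambiguate the mug from the can.
one_column = recognize([[("top", "rim")], [("side", "curved")], [("back", "handle")]])

# Three columns sensing different locations at once agree in a single step.
three_columns = recognize([[("top", "rim"), ("side", "curved"), ("back", "handle")]])
```

In this toy, a single column touching only the rim cannot tell the mug from the can, while three columns sensing different locations simultaneously settle on the mug without any movement. That is the intuition behind integrating over both time and space.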