In this newsletter, we have an exciting announcement, book updates, events, and more.

Numenta Deep Learning Technology Demonstration: 100x Performance Acceleration

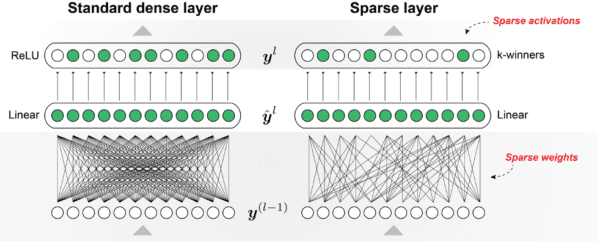

We recently announced that we achieved more than 100x performance improvements on inference tasks in deep learning networks, without any loss in accuracy, using sparse-sparse implementations. Previously, we announced a 50x speed-up using a sparse-dense implementation, which takes full advantage of sparse weights but not sparse activations. For this demonstration, we leveraged both forms of sparsity found in the brain, sparse weights and sparse activations, to create a sparse-sparse implementation.

Our Sparse-Sparse Approach: Fast AND Accurate

Sparsity in neural networks has become increasingly popular over the last few years, but achieving significant performance improvements has been difficult. Techniques vary greatly in sophistication, and today’s hardware is designed for dense computations. Our approach to sparsity, a key component of the Thousand Brains Theory, shows that it is possible to build extremely sparse networks that achieve accuracy comparable to their dense counterparts and run on today’s hardware. Using sparse weights and sparse activations together yields multiplicative effects, enabling performance improvements an order of magnitude larger than those typically achieved.
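To make the multiplicative effect concrete, here is a minimal Python sketch that counts multiply-accumulate (MAC) operations for a single fully connected layer, y = Wx, under three strategies: dense, sparse-dense (skip zero weights), and sparse-sparse (skip any product where either the weight or the activation is zero). The layer sizes, the 10% weight and activation densities, and the helper names `make_sparse_vector` and `mac_counts` are illustrative assumptions, not figures or code from Numenta. The sketch only counts arithmetic operations; real speedups also depend on memory access, indexing overhead, and the hardware.

```python
import random


def make_sparse_vector(n, k, rng):
    """Hypothetical helper: a length-n vector with exactly k nonzero entries at random positions."""
    vec = [0.0] * n
    for i in rng.sample(range(n), k):
        vec[i] = rng.uniform(0.1, 1.0)  # any nonzero value works for counting
    return vec


def mac_counts(weights, activations):
    """Hypothetical helper: count MACs for y = W x under three execution strategies."""
    # Dense: every weight is multiplied by its activation.
    dense = sum(len(row) for row in weights)
    # Sparse-dense: zero weights are skipped, but every activation is still used.
    sparse_dense = sum(1 for row in weights for w in row if w != 0)
    # Sparse-sparse: only products where both the weight and the activation are nonzero.
    sparse_sparse = sum(
        1
        for row in weights
        for w, a in zip(row, activations)
        if w != 0 and a != 0
    )
    return dense, sparse_dense, sparse_sparse


# Illustrative layer: 1000 inputs, 1000 outputs, 10% weight and 10% activation density.
rng = random.Random(0)
n_in, n_out = 1000, 1000
k_w, k_a = 100, 100  # nonzeros per weight row, nonzero input activations
W = [make_sparse_vector(n_in, k_w, rng) for _ in range(n_out)]
x = make_sparse_vector(n_in, k_a, rng)

dense, sd, ss = mac_counts(W, x)
print(f"dense: {dense}, sparse-dense: {sd}, sparse-sparse: {ss}")
print(f"MAC reduction: sparse-dense ~{dense / sd:.0f}x, sparse-sparse ~{dense / ss:.0f}x")
```

With these assumed densities, the sparse-dense strategy performs exactly 10% of the dense MACs, while the sparse-sparse strategy performs roughly 10% × 10% = 1% of them, because each product survives only if both its weight and its activation are nonzero. That product of the two densities is the multiplicative effect described above.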

Sparsity is only the beginning of our roadmap for building efficient, intelligent machines. As we continue to implement more of the Thousand Brains Theory in algorithms, we expect to see additional benefits in generalization, robustness, and sensorimotor behavior.

To learn more about how our sparse-sparse implementations generate performance improvements, read our white paper and blog post about the announcement.

Conversations on A Thousand Brains – Video Series

Last month we launched a new video series called ‘Conversations on A Thousand Brains’ that aims to extend the dialogue that our co-founder Jeff Hawkins’ new book, A Thousand Brains, has generated across different fields. To kick off this series, we talked with Dr. Michael Riendeau and two of his students from Eagle Hill School about the possible pedagogical implications of the ideas in the book. You can find the video recording here and a follow-up Q&A with Dr. Riendeau here. If you’re interested in discussing how the ideas in the book might apply to your area of expertise, email us.

A Thousand Brains Media Coverage and Virtual Tour

The release of A Thousand Brains has kept Jeff busy with his virtual book tour. To see a roundup of our favorite articles and appearances, including the latest episode of Guy Kawasaki’s Remarkable People podcast, check out our Select Media Coverage page.

You can also view the recordings from several talks, including “How Your Brain Understands the World and Why It Sometimes Gets It Wrong” and our recent Brains@Bay meetup “A Thousand Brains: a fireside chat” between Jeff and our Senior AI Researcher Lucas Souza. Lucas asked Jeff about the main aspects of the Thousand Brains Theory and how we can incorporate those ideas into existing learning algorithms.

Upcoming Events

➤ BAAI Conference Keynote – June 1, 2021 6:30pm PDT

Jeff is a keynote speaker at the 2021 Beijing Academy of Artificial Intelligence Conference. He will discuss the key components of the Thousand Brains Theory and his insights on the current and future AI landscape.

➤ Brains@Bay: Grid Cells – June 7, 2021 9:30am PDT

At our June Meetup, we will discuss grid cells and how this topic influences the study and development of machine learning algorithms. We are excited to have Marcus Lewis (Numenta), James Whittington (University of Oxford), and Kimberly Stachenfeld (DeepMind) present their latest work and insights. Reserve your spot here. Visit our YouTube channel for videos of past meetups, events, and research meetings.

➤ CogX Keynote Q&A – June 14, 2021 11:00am PDT

CogX is a global festival of AI and emerging technology that brings together 100,000 people both in person and online. Jeff will talk with moderator Azeem Azhar and answer questions about Numenta’s research and its impact on the future of AI.

GLOM vs Thousand Brains Theory

We’ve received several questions lately about the differences between Geoffrey Hinton’s GLOM model and Numenta’s Thousand Brains Theory. In this blog post, our Marketing Associate Charmaine Lai highlights the main commonalities and differences at a high level.

Follow us on Twitter to make sure you don’t miss any updates on company and book-related events. Thank you for continuing to follow Numenta.

Christy Maver

VP of Marketing